ZESS shows strong presence at the IEEE SENSORS conference.

Young researchers from the Center for Sensor Systems (ZESS) of the University of Siegen will present six scientific contributions at IEEE SENSORS, the flagship conference of the IEEE Sensors Council.

With the development of disruptive new technologies, from sophisticated smartphones to mobile robots and autonomous driving, sensors have become a cornerstone of recent technological advances, albeit often an unnoticed one. Over the last two decades, the Center for Sensor Systems (ZESS) of the University of Siegen has played an important role in this development, contributing novel sensing principles, signal processing techniques, and high-impact applications.

Thanks to a blend of long-standing local research and transnational projects that foster academic exchange and the international mobility of researchers, ZESS is proud to announce that a new generation of young researchers will present their work at the IEEE SENSORS 2022 conference in the form of four scientific papers and two live demonstrators. The flagship conference of the IEEE Sensors Council, which brings together around a thousand sensor experts, will be held in Dallas, Texas, USA, from October 30 to November 2, 2022.

3D sensing with no light emission:

Acquiring the 3D geometry of the environment is of paramount importance, e.g., for robot navigation, autonomous driving, or scene reconstruction. Existing 3D sensors, such as time-of-flight (ToF) cameras or lidar, require light emission, which leads to high power consumption. Faisal Ahmed, an Early Stage Researcher (ESR) in the Marie Skłodowska-Curie Innovative Training Network (ITN) "MENELAOSNT", proposes a paradigm shift from competition to collaboration: instead of emitting its own light, the sensor exploits existing sources of modulated light, such as LiFi lamps, to enable passive ToF 3D imaging.

If bats were not blind…

they could see the stars or any other stationary light source, which could serve as an additional reference for localization. Bats use the time of flight of sound pulses to estimate distances. "This nature-inspired principle can be found in sonar, radar, lidar, and ToF cameras, which can 'see' distances with light," explains Zhibin Liu, a research assistant at ZESS who uses ToF cameras for the optical localization of mobile robots in industrial environments and warehouses. "ToF cameras can do both: they can use light sources as localization cues and the bats' ranging method to estimate relative distances," he adds.
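For readers curious about the ranging principle Zhibin Liu describes, the short Python sketch below converts a measured delay into a distance. It is an illustration under stated assumptions, not ZESS code: the speed of sound (343 m/s in air at about 20 °C) and the 20 MHz modulation frequency are example values, and the phase-based function assumes a continuous-wave ToF camera, a common design in which distance is recovered from the phase shift of modulated light rather than from a timed pulse.

```python
import math

SPEED_OF_SOUND = 343.0          # m/s in air at about 20 °C (example value)
SPEED_OF_LIGHT = 299_792_458.0  # m/s in vacuum


def distance_from_round_trip(t_round_trip, speed):
    """Pulsed time of flight (bat, sonar, pulsed lidar).

    The pulse travels to the target and back, so the one-way
    distance is half of speed * elapsed time.
    """
    return speed * t_round_trip / 2.0


def distance_from_phase(phase_rad, f_mod_hz):
    """Continuous-wave ToF (assumed camera type).

    A phase shift phi of light modulated at f_mod corresponds to a
    round-trip delay of phi / (2 * pi * f_mod); halving the round trip
    gives d = c * phi / (4 * pi * f_mod).
    """
    return SPEED_OF_LIGHT * phase_rad / (4.0 * math.pi * f_mod_hz)


def unambiguous_range(f_mod_hz):
    """Largest distance before the phase wraps around past 2 * pi."""
    return SPEED_OF_LIGHT / (2.0 * f_mod_hz)


if __name__ == "__main__":
    print(distance_from_round_trip(0.01, SPEED_OF_SOUND))  # bat echo after 10 ms
    print(distance_from_phase(math.pi / 2, 20e6))          # quarter-cycle shift at 20 MHz
    print(unambiguous_range(20e6))
```

For example, a bat echo returning after 10 ms corresponds to about 1.7 m, while a phase shift of π/2 at 20 MHz corresponds to roughly 1.87 m. Because the phase wraps around every 2π, such a camera measures distance unambiguously only up to c / (2 f_mod), about 7.5 m at 20 MHz.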

Sounds better with light.

Materials absorb and delay sound waves differently depending on their frequency. Nobody would use metal sheets for acoustic insulation! Similar effects are observed with modulated light. "Different materials respond differently to modulated light, and ToF cameras allow us to measure this effect per pixel," clarifies Rajababu U. Singh, a student in the Master's program in Mechatronics. The result is material images that convey richer information than conventional pictures.

"Most of the research lines in the group are related to ToF 3D imaging, and many of our research outputs result from a long-standing collaboration with the high-tech company pmdtechnologies ag," explains Dr. Heredia Conde, who leads the group. "The solid support we have received over the years not only allows us to train tomorrow's researchers, but also attests to the relevance of our research to industrial players," he continues. "Nevertheless, our presence at this event is not restricted to our local researchers; it also includes work by visiting researchers, such as Adolphe Ndagijimana, an ESR from MENELAOSNT who is pursuing his doctoral degree at UPNA in Pamplona (Spain) and spent six months at ZESS applying a novel sampling theory to achieve single-pixel terahertz imaging."

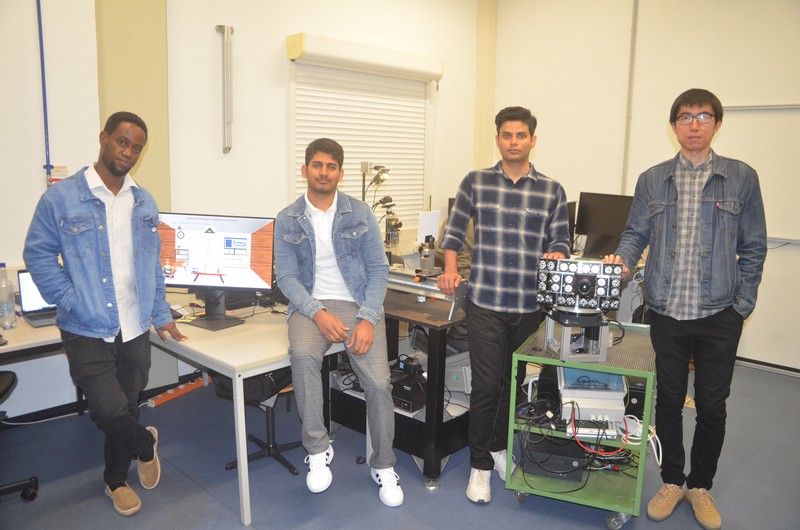

Young scientists in the laboratory of the Center for Sensor Systems (from left): Adolphe Ndagijimana, Faisal Ahmed, Rajababu U. Singh and Zhibin Liu.